Calibration Intervals

Calibration intervals are often treated as fixed lengths of time rather than flexible, data-driven durations. For organizations that rely on calibrated equipment, the calibration interval should be determined through a structured evaluation of risk, historical performance, and actual usage conditions. Defaulting solely to manufacturer recommendations is a common starting point, but it is rarely sufficient for maintaining optimal accuracy or cost efficiency over time.

This article outlines a practical framework for establishing calibration intervals by combining manufacturer guidance, historical calibration data, and usage-based indicators. It also addresses the role of preventive maintenance as a complementary control that extends equipment life and stabilizes measurement performance.

Manufacturer Recommendations as a Baseline

Manufacturer recommendations provide an initial reference point for calibration intervals. These recommendations are typically derived from controlled testing conditions and generalized assumptions about instrument stability and usage patterns. For new equipment with no performance history, they serve as a reasonable starting position.

However, several limitations must be recognized. Manufacturer intervals do not account for the specific environmental conditions in which the equipment operates, such as temperature variability, humidity, vibration, or exposure to contaminants. They also do not reflect differences in handling practices, operator variability, or workload intensity. As a result, strict adherence to manufacturer intervals can lead to over-calibration in low-risk scenarios or to under-calibration in high-use or high-stress environments.

A more effective approach is to adopt the manufacturer’s interval as an initial setting and then adjust it based on observed performance and usage. This ensures that the interval remains aligned with actual instrument behavior rather than theoretical expectations. In regulated environments, documenting each adjustment and its rationale is essential to demonstrate that interval decisions are technically justified and not arbitrary.

Using Historical Calibration Data

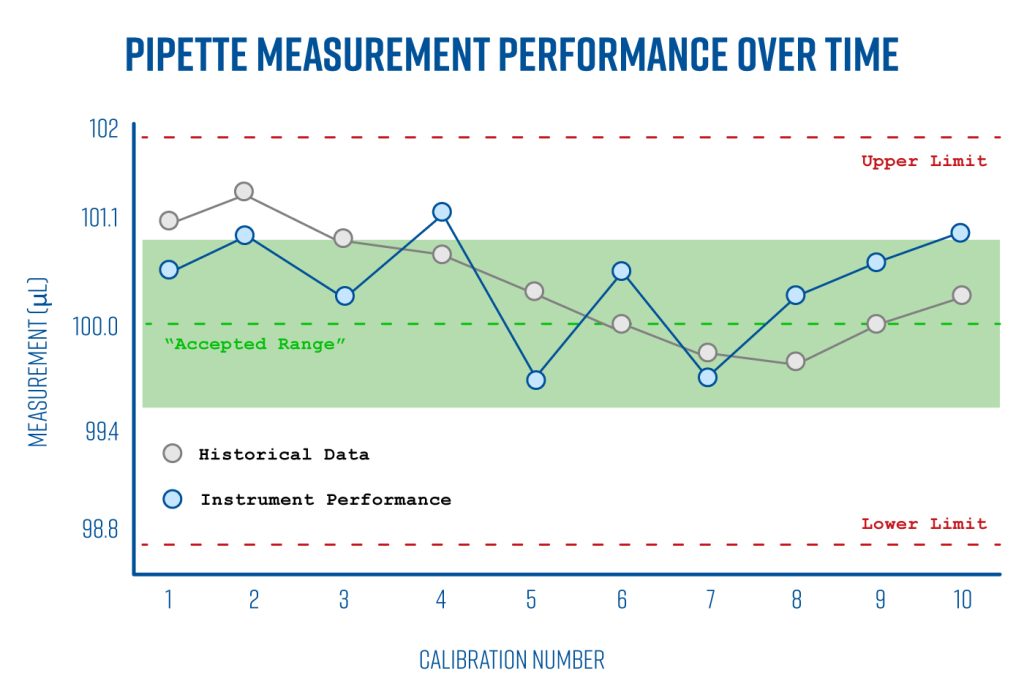

Historical calibration data is the most direct and defensible basis for adjusting calibration intervals. It provides evidence of how an instrument performs over time and under actual operating conditions. Key parameters to evaluate include measurement error, drift trends, as-found conditions, and the frequency of out-of-tolerance results.

A structured analysis of calibration records should address each of these parameters across successive calibration cycles.

If an instrument consistently demonstrates stable performance with minimal drift and no out-of-tolerance findings, the calibration interval may be extended incrementally. Extensions should be conservative and supported by multiple calibration cycles to ensure that the trend is reliable. Conversely, if data shows increasing drift, frequent adjustments, or any out-of-tolerance conditions, the interval should be reduced to mitigate risk.
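The extend-or-reduce logic described above can be sketched as a simple rule. This is a minimal illustration, not a prescribed method: the function name and every threshold (three stable cycles, half of tolerance, a 25% extension, a 50% reduction) are assumptions chosen for the example, and the sketch only checks out-of-tolerance findings and margin to tolerance, not drift trends or adjustment frequency.

```python
from typing import Sequence


def suggest_interval(current_months: float,
                     as_found_errors: Sequence[float],
                     tolerance: float,
                     min_cycles: int = 3,           # assumed: cycles needed before extending
                     stable_fraction: float = 0.5,  # assumed: "stable" means within 50% of tolerance
                     extend_factor: float = 1.25,   # assumed: conservative 25% extension
                     reduce_factor: float = 0.5) -> float:  # assumed: halve after any OOT
    """Suggest a new interval from as-found errors (oldest to newest)."""
    if tolerance <= 0:
        raise ValueError("tolerance must be positive")

    # Express each as-found error as a fraction of the tolerance limit.
    ratios = [abs(e) / tolerance for e in as_found_errors]

    # Any out-of-tolerance finding shortens the interval.
    if any(r > 1.0 for r in ratios):
        return current_months * reduce_factor

    # Extend only when enough cycles all show comfortable margin.
    if len(ratios) >= min_cycles and all(r <= stable_fraction for r in ratios):
        return current_months * extend_factor

    # Otherwise there is not enough evidence to change anything.
    return current_months
```

With these assumed defaults, a 12-month interval backed by three as-found results within half of tolerance becomes 15 months, while a single out-of-tolerance result cuts it to 6 months.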

Statistical methods can be applied to strengthen interval decisions. For example, control charting of calibration results can identify trends and variability over time. Measurement uncertainty analysis can also be used to determine how closely the instrument operates within its tolerance limits.
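As one possible illustration of control charting, the sketch below builds an individuals (XmR) chart from a series of as-found errors. It uses the conventional constant 2.66 to convert the average moving range into three-sigma limits. The function name and the sample data are hypothetical, and a real program would usually add run rules and more data points than shown here.

```python
from statistics import mean
from typing import List, Sequence, Tuple


def individuals_chart(values: Sequence[float]) -> Tuple[float, float, float, List[int]]:
    """Return (center, lcl, ucl, flagged_indices) for an individuals chart."""
    if len(values) < 2:
        raise ValueError("need at least two calibration results")

    center = mean(values)

    # Moving range: absolute difference between consecutive results.
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    mr_bar = mean(moving_ranges)

    # 2.66 is the standard XmR constant (3 / d2, with d2 = 1.128 for n = 2).
    ucl = center + 2.66 * mr_bar
    lcl = center - 2.66 * mr_bar

    # Flag results that fall outside the control limits.
    flagged = [i for i, v in enumerate(values) if v > ucl or v < lcl]
    return center, lcl, ucl, flagged


# Hypothetical as-found errors from eight calibration cycles.
errors = [0.10, 0.12, 0.11, 0.13, 0.12, 0.11, 0.12, 0.40]
center, lcl, ucl, flagged = individuals_chart(errors)
```

In this made-up series, only the final result falls outside the computed limits, which is the kind of signal that would prompt a closer look before the next interval decision.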

If you need help determining your calibration intervals, Bio Calibration Company can help. Contact us to speak with an expert today.

Usage-Based Interval Determination

Equipment usage is a critical but often underutilized factor in setting calibration intervals. Instruments that are used frequently or under demanding conditions are more likely to experience wear, drift, and degradation. Conversely, equipment with limited or intermittent use may maintain stability over longer periods.

Usage can be characterized in several ways, including how often the instrument is used and how demanding its operating conditions are.

For example, pipettes used for viscous or corrosive liquids may require more frequent calibration and maintenance due to increased mechanical stress and potential contamination.

Organizations can implement usage tracking systems to support interval decisions. This may include simple logs, automated counters, or integration with laboratory information systems. Over time, this data can be correlated with calibration results to identify relationships between usage intensity and performance degradation.

A practical approach is to define usage categories, such as low, moderate, and high, and use them as supplementary input when deciding on calibration intervals.
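A minimal sketch of usage categories, and of relating them to calibration results, might look like the following. The thresholds (20 and 100 uses per week), the function names, and the record layout are all assumptions for illustration. Appropriate cut-offs depend on the instrument type and should come from your own usage logs.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, Tuple


def usage_category(uses_per_week: float,
                   moderate_from: float = 20,   # assumed threshold
                   high_from: float = 100) -> str:  # assumed threshold
    """Classify an instrument as low, moderate, or high usage."""
    if uses_per_week < 0:
        raise ValueError("uses_per_week cannot be negative")
    if uses_per_week >= high_from:
        return "high"
    if uses_per_week >= moderate_from:
        return "moderate"
    return "low"


def mean_drift_by_category(records: Iterable[Tuple[float, float]]) -> Dict[str, float]:
    """Average absolute drift per usage category.

    Each record is a (uses_per_week, observed_drift) pair taken from
    usage logs and the matching calibration result.
    """
    grouped = defaultdict(list)
    for uses, drift in records:
        grouped[usage_category(uses)].append(abs(drift))
    return {category: mean(drifts) for category, drifts in grouped.items()}
```

If the high-usage group shows clearly larger average drift than the low-usage group, that supports assigning different intervals to the two groups. If the groups look alike, usage is probably not the main driver for that instrument type.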

Risk-Based Considerations

Calibration interval decisions should also incorporate a risk-based perspective. The criticality of the measurement, the potential impact of inaccurate results, and regulatory requirements all influence the conservatism of the interval.

For high-risk applications, such as those affecting product quality, patient safety, or regulatory compliance, shorter intervals are generally justified even if historical data suggests stability. In lower-risk applications, longer intervals may be acceptable if performance data supports the decision.

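Risk assessment should consider measurement criticality, the consequences of an inaccurate result, and applicable regulatory requirements.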

Documenting this assessment ensures that interval decisions are transparent and defensible during audits or customer reviews.
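One way to make the risk perspective concrete is to cap any data-driven interval at a maximum that depends on the risk level. The sketch below assumes three risk levels and cap values of 6, 12, and 24 months. These figures are purely illustrative and are not drawn from any standard or regulation.

```python
from typing import Mapping

# Hypothetical maximum intervals in months for each risk level.
RISK_CAPS_MONTHS = {"high": 6, "medium": 12, "low": 24}


def apply_risk_cap(proposed_months: float,
                   risk_level: str,
                   caps: Mapping[str, float] = RISK_CAPS_MONTHS) -> float:
    """Limit a proposed interval to the cap for the given risk level."""
    if risk_level not in caps:
        raise ValueError(f"unknown risk level: {risk_level!r}")
    # Stable history can justify a longer interval, but never beyond the cap.
    return min(proposed_months, caps[risk_level])
```

Under these assumed caps, a high-risk instrument whose history would support 15 months is still held at 6 months, which reflects the point that stability alone does not justify a long interval for critical measurements.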

Preventive Maintenance and Its Impact on Calibration

Preventive maintenance is a critical component of any calibration strategy. While calibration verifies and adjusts measurement accuracy at specific points in time, maintenance addresses the equipment’s underlying condition to prevent degradation between calibrations.

Maintenance intervals should be aligned with both usage and environmental conditions. High usage or harsh environments typically require more frequent maintenance. Integrating maintenance records with calibration data provides a more complete picture of equipment performance and supports more accurate interval decisions.

It is also important to ensure that maintenance activities are performed by qualified personnel using appropriate procedures and tools. Improper maintenance can introduce additional variability or damage the equipment, negating its intended benefits.

Conclusion

Calibration intervals should not be static or based solely on external recommendations. A data-driven approach that incorporates historical performance, actual usage, and risk considerations provides a more accurate and cost-effective way to maintain measurement reliability. Preventive maintenance further strengthens this approach by addressing the root causes of performance degradation.

Organizations that implement these practices can expect improved measurement confidence, reduced risk of nonconforming results, and more efficient use of calibration resources. The result is a calibration program that is both technically sound and aligned with operational realities.

If you need help creating a preventive maintenance (PM) and calibration program tailored to your needs, please reach out to us. One of our experts can help you determine the right calibration and PM plan for your equipment.